Physical reality is nothing but a bunch of spikes and pulses, or glitches. Take any smooth phenomenon, no matter how benign it might seem, and decompose it into an infinitely dense array of infinitesimally transient, infinitely high glitches. Then the sum of all glitches, weighted appropriately, becomes the phenomenon. This might be called the “glitch” function, but it is better known as Green’s function in honor of the ex-millwright George Green, who taught himself mathematics at night to become one of England’s leading mathematicians of the age.

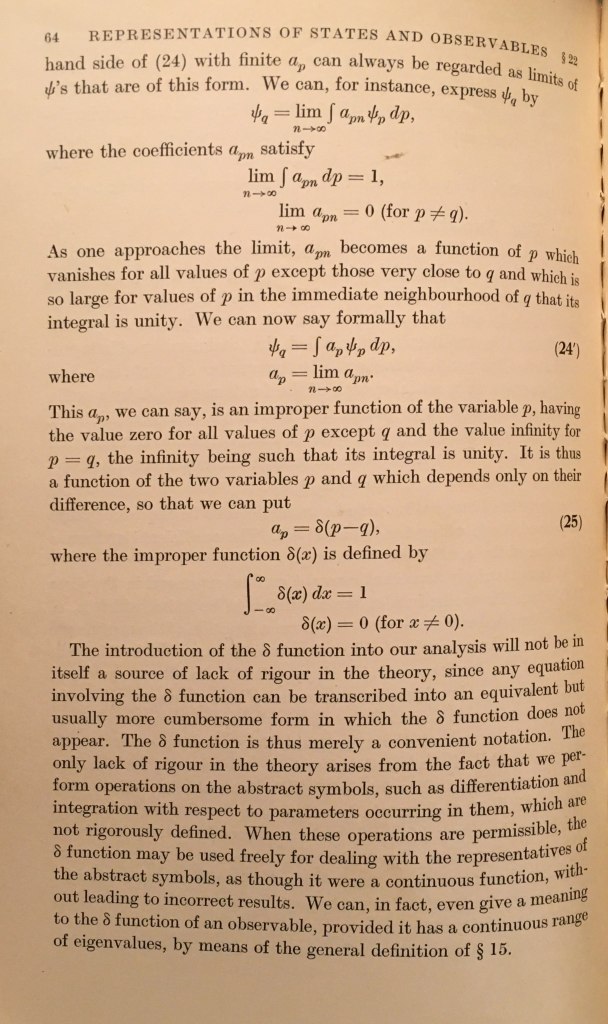

The δ function is thus merely a convenient notation … we perform operations on the abstract symbols, such as differentiation and integration …

P. A. M. Dirac (1930)

The mathematics behind the “glitch” has a long history that began in the golden era of French analysis with the mathematicians Cauchy and Fourier, was employed by the electrical engineer Heaviside, and ultimately fell into the fertile hands of the quantum physicist, Paul Dirac, after whom it is named.

Augustin-Louis Cauchy (1815)

The French mathematician and physicist Augustin-Louis Cauchy (1789 – 1857) has lent his name to a wide array of theorems, proofs and laws that are still in use today. In mathematics, he was one of the first to establish “modern” functional analysis and especially complex analysis. In physics he established a rigorous foundation for elasticity theory (including the elastic properties of the so-called luminiferous ether).

In the early days of the 1800’s Cauchy was exploring how integrals could be used to define properties of functions. In modern terminology we would say that he was defining kernel integrals, where a function is integrated over a kernel to yield some property of the function.

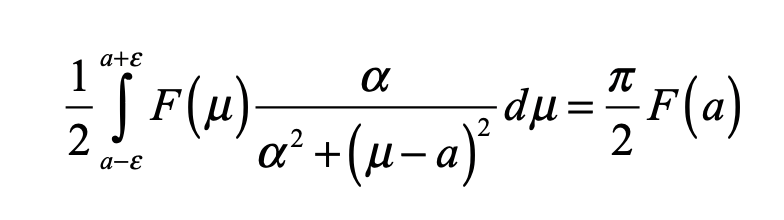

In 1815 Cauchy read before the Academy of Paris a paper with the long title “Theory of the propagation of waves on the surface of a heavy fluid of indefinite depth”. The paper was not published until 1827, more than ten years later, by which time it had expanded to 300 pages and contained numerous footnotes. The thirteenth such footnote was titled “On definite integrals and the principal values of indefinite integrals” and it contained one of the first examples of what would later become known as a generalized function, or distribution. The integral is a function F(μ) integrated over a kernel

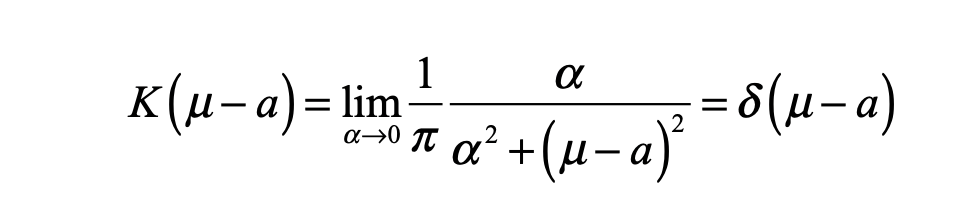

Cauchy lets the scale parameter α be “an infinitely small number”. The kernel is thus essentially zero for any value of μ not too close to the point a on which it is centered. Today, we would call the kernel given by

$$\frac{1}{\pi}\,\frac{\alpha}{\alpha^2 + (\mu - a)^2}$$

in the limit that α vanishes, “the delta function” δ(μ - a).

Cauchy’s approach to the delta function is today one of the most commonly used descriptions of what a delta function is. It is not enough to simply say that a delta function is an infinitely narrow, infinitely high function whose integral is equal to unity. It helps to illustrate the behavior of the Cauchy function as α gets progressively smaller, as shown in Fig. 1.

In the limit as α approaches zero, the function grows progressively higher and progressively narrower, but the integral over the function remains unity.
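The narrowing described above can be checked numerically. The following is a minimal sketch, assuming the Cauchy function takes the normalized Lorentzian form (1/π) α / (α² + x²) centered at the origin; the helper names `cauchy_kernel`, `integrate` and `kernel_summary` are hypothetical and chosen only for this illustration.

```python
import math


def cauchy_kernel(x, alpha):
    """Normalized Lorentzian of width alpha, centered at x = 0 (assumed form)."""
    return (alpha / math.pi) / (alpha**2 + x**2)


def integrate(f, lo, hi, n=200_000):
    """Midpoint-rule approximation of the integral of f from lo to hi."""
    h = (hi - lo) / n
    return h * sum(f(lo + (i + 0.5) * h) for i in range(n))


def kernel_summary(alpha, half_width=50.0):
    """Return (peak height, area over [-half_width, half_width])."""
    peak = cauchy_kernel(0.0, alpha)
    area = integrate(lambda x: cauchy_kernel(x, alpha), -half_width, half_width)
    return peak, area


if __name__ == "__main__":
    # The peak grows like 1 / (pi * alpha) while the area stays close to 1.
    for alpha in (1.0, 0.1, 0.01):
        peak, area = kernel_summary(alpha)
        print(f"alpha={alpha:<5} peak={peak:9.3f} area={area:.4f}")
```

The area falls slightly short of unity only because the integration window is finite; the Lorentzian has slowly decaying tails, and the shortfall shrinks as α decreases.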

Joseph Fourier (1822)

The delayed publication of Cauchy’s memoir kept it out of common knowledge, so Joseph Fourier (1768 – 1830) can be excused if he did not know of it by the time he published his monumental work on heat in 1822. Perhaps this is why Fourier’s approach to the delta function was different from Cauchy’s.

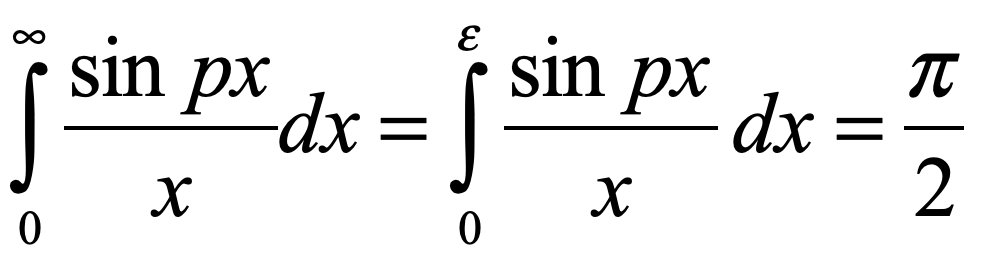

Fourier noted that an integral over a sinusoidal function, as the argument of the sinusoidal function went to infinity, became independent of the limits of integration. He showed

$$\int_{-\epsilon}^{\epsilon} \frac{\sin(px)}{\pi x}\,dx \to 1$$

when ε ≫ 1/p as p went to infinity. In modern notation, this would be the delta function defined through the “sinc” function

$$\delta(x - \alpha) = \lim_{p \to \infty} \frac{\sin\left[p\,(x - \alpha)\right]}{\pi\,(x - \alpha)}$$

and Fourier noted that integrating this form against another function f(x) yielded the value f(α) of the function at α, rediscovering the result of Cauchy, but using the sinc function of Fig. 2 instead of the Cauchy function of Fig. 1.
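The sifting behavior Fourier noted can be illustrated with a short numerical experiment. This is a sketch under stated assumptions: the kernel is taken to be sin(p(x − a)) / (π(x − a)), the test function is a Gaussian chosen only for illustration, and the names `sinc_kernel`, `sift` and `gaussian` are hypothetical. The evaluation point is written `a` in the code, where the text uses α.

```python
import math


def sinc_kernel(x, p):
    """sin(p x) / (pi x), with the x -> 0 limit p / pi handled explicitly."""
    if abs(x) < 1e-12:
        return p / math.pi
    return math.sin(p * x) / (math.pi * x)


def sift(f, a, p, half_width=10.0, n=100_000):
    """Midpoint-rule estimate of the integral of f(x) * sinc_kernel(x - a, p)."""
    lo = a - half_width
    h = 2.0 * half_width / n
    total = 0.0
    for i in range(n):
        x = lo + (i + 0.5) * h
        total += f(x) * sinc_kernel(x - a, p)
    return total * h


def gaussian(x):
    """Illustrative smooth test function."""
    return math.exp(-x * x)


if __name__ == "__main__":
    a = 0.5
    print(f"f(a) = {gaussian(a):.6f}")
    # As p grows, the integral should approach f(a).
    for p in (1.0, 5.0, 20.0):
        print(f"p={p:<5} integral={sift(gaussian, a, p):.6f}")
```

Unlike the Cauchy function, the sinc kernel takes negative values and oscillates ever faster as p grows; it is the cancellation of those oscillations against a smooth f(x) that leaves only the value at the center.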

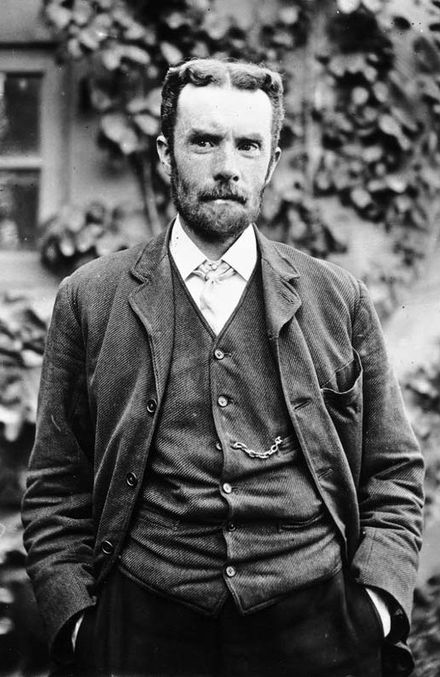

George Green’s Function (1828)

A history of the delta function cannot be complete without mention of George Green, one of the most remarkable British mathematicians of the 1800’s. He was a miller’s son who had only one year of education and spent most of his early life tending to his father’s mill. In his spare time, and to cut the tedium of his work, he read the most up-to-date work of the French mathematicians, including the papers of Cauchy, Poisson and Fourier, whose work far surpassed the British work of that time. Unbelievably, he mastered the material and developed new material of his own, which he eventually self-published. This is the mathematical work that introduced the potential function and introduced fundamental solutions to unit sources, which today would be called point charges or delta functions. These fundamental solutions are equivalent to the modern Green’s function, although they were developed rigorously much later by Courant and Hilbert and by Kirchhoff.

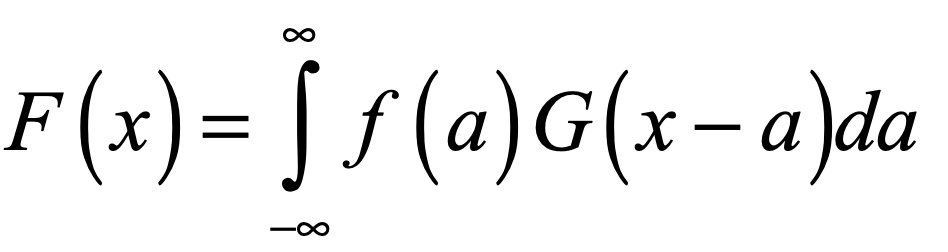

The modern idea of a Green’s function is simply the system response to a unit impulse, like throwing a pebble into a pond to launch expanding ripples or striking a bell. To obtain the solution for a general distributed source, one integrates over the fundamental solutions weighted by the strength of the source at each point. If the system response to a delta function impulse at x = a, that is, a delta function δ(x-a), is G(x-a), then the response u(x) of the system to a distributed force f(x) is given by

$$u(x) = \int f(a)\, G(x-a)\, da$$

where G(x-a) is called the Green’s function.
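To make the superposition idea concrete, here is a minimal sketch for one illustrative system that is not taken from the text: a first-order relaxation du/dt + γu = f(t), whose impulse response is assumed to be G(t − a) = exp(−γ(t − a)) for t ≥ a and zero before the impulse. Time t plays the role of x, and the names `GAMMA`, `green`, `response`, `unit_step_force` and `exact_step_response` are hypothetical.

```python
import math

GAMMA = 2.0  # decay rate of the illustrative system du/dt + GAMMA * u = f(t)


def green(t):
    """Impulse response: zero before the impulse, exponential decay after it."""
    return math.exp(-GAMMA * t) if t >= 0.0 else 0.0


def response(f, t, a_min=0.0, n=20_000):
    """Midpoint estimate of the integral of f(a) * G(t - a) over a in [a_min, t].

    The upper limit is t because this G vanishes for impulses applied after t.
    """
    if t <= a_min:
        return 0.0
    h = (t - a_min) / n
    total = 0.0
    for i in range(n):
        a = a_min + (i + 0.5) * h
        total += f(a) * green(t - a)
    return total * h


def unit_step_force(a):
    """Force switched on at a = 0 and held constant."""
    return 1.0 if a >= 0.0 else 0.0


def exact_step_response(t):
    """Closed-form solution of du/dt + GAMMA * u = 1 with u(0) = 0."""
    return (1.0 - math.exp(-GAMMA * t)) / GAMMA


if __name__ == "__main__":
    for t in (0.5, 1.0, 3.0):
        print(t, response(unit_step_force, t), exact_step_response(t))
```

The numerical superposition of impulse responses should agree with the closed-form step response, which is the practical content of the Green’s function idea: solve once for a unit impulse, then integrate to handle any source.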

Oliver Heaviside (1893)

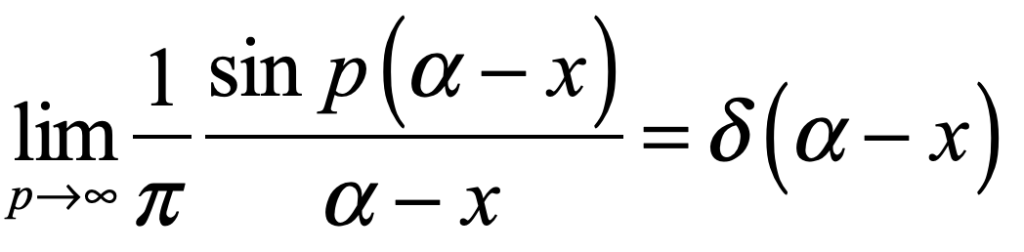

Oliver Heaviside (1850 – 1925) tended to follow his own path, independently of whatever the mathematicians were doing. Heaviside took particularly pragmatic approaches based on physical phenomena and how they might behave in an experiment. This is the context in which he introduced once again the delta function, unaware of the work of Cauchy or Fourier.

Heaviside was an engineer at heart who practiced his art by doing. He was not concerned with rigor, only with what works. This part of his personality may have been forged by his apprenticeship in telegraph technology, helped by his uncle Charles Wheatstone (of the Wheatstone bridge). While still a young man, Heaviside tried to tackle Maxwell’s new treatise on electricity and magnetism, but he realized his mathematics was lacking, so he began a project of self-education that took several years. The product of those years was his development of an idiosyncratic approach to electronics that may be best described as operator algebra. His algebra contained misbehaved functions, such as the step function that was later named after him. It could also handle the derivative of the step function, which is yet another way of defining the delta function, though certainly not to the satisfaction of any rigorous mathematician, but it worked. The operator theory could even handle the derivative of the delta function.
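The statement that the derivative of a step is a delta function can be made tangible with a smoothed step. The sketch below assumes one particular smoothing, H(x) = 1/2 + arctan(x/ε)/π, chosen because its derivative is the Lorentzian (1/π) ε / (ε² + x²); this choice and the helper names are illustrative assumptions, not Heaviside’s operator method.

```python
import math


def smooth_step(x, eps):
    """Smoothed step: tends to 0 for x < 0 and to 1 for x > 0 as eps -> 0."""
    return 0.5 + math.atan(x / eps) / math.pi


def lorentzian(x, eps):
    """Analytic derivative of smooth_step with respect to x."""
    return (eps / math.pi) / (eps**2 + x**2)


def central_difference(f, x, h=1e-6):
    """Symmetric finite-difference estimate of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


if __name__ == "__main__":
    eps = 0.1
    for x in (-0.3, 0.0, 0.05):
        numeric = central_difference(lambda y: smooth_step(y, eps), x)
        print(x, numeric, lorentzian(x, eps))
```

As ε shrinks, the step sharpens toward a true jump and its derivative becomes the ever taller and narrower spike of unit area.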

Perhaps the most important influence by Heaviside was his connection of the delta function to Fourier integrals. He was one of the first to show that

$$\delta(x - a) = \frac{1}{2\pi}\int_{-\infty}^{\infty} e^{ik(x-a)}\,dk$$

which states that the Fourier transform of a delta function is a complex sinusoid, and the Fourier transform of a sinusoid is a delta function. Heaviside wrote several influential textbooks on his methods, and by the 1920’s these methods, including the Heaviside function and its derivative, had become standard parts of the engineer’s mathematical toolbox.
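The Fourier-integral view connects directly back to Fourier’s sinc form. As a sketch, assuming the modern representation δ(x) = (1/2π) ∫ exp(ikx) dk, cutting the integral off at |k| ≤ k_max should give sin(k_max x) / (πx), which sharpens into the delta function as k_max grows. The function names below are hypothetical.

```python
import cmath
import math


def truncated_fourier_delta(x, k_max, n=20_000):
    """Midpoint estimate of (1 / 2 pi) * integral of exp(i k x) dk over [-k_max, k_max]."""
    h = 2.0 * k_max / n
    total = 0.0 + 0.0j
    for j in range(n):
        k = -k_max + (j + 0.5) * h
        total += cmath.exp(1j * k * x)
    return total * h / (2.0 * math.pi)


def sinc_form(x, k_max):
    """Closed form of the truncated integral: sin(k_max x) / (pi x)."""
    if x == 0:
        return k_max / math.pi
    return math.sin(k_max * x) / (math.pi * x)


if __name__ == "__main__":
    k_max = 50.0
    for x in (0.0, 0.3, 1.0):
        value = truncated_fourier_delta(x, k_max)
        print(x, value.real, sinc_form(x, k_max))
```

The imaginary part cancels because the contributions from +k and −k are complex conjugates, leaving a real, even kernel.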

Given the work by Cauchy, Fourier, Green and Heaviside, what was left for Paul Dirac to do?

Paul Dirac (1930)

Paul Dirac (1902 – 1984) was given the moniker “The Strangest Man” by Niels Bohr during his visit to Copenhagen shortly after he had received his PhD. In part, this was because of Dirac’s internal intensity that could make him seem disconnected from those around him. When he was working on a problem in his head, it was not unusual for him to start walking, and by the time he became aware of his surroundings again, he would have walked the length of the city of Copenhagen. And his solutions to problems were ingenious, breaking bold new ground where others, some of whom were geniuses themselves, were fumbling in the dark.

Among his many influential works—works that changed how physicists thought of and wrote about quantum systems—was his 1930 textbook on quantum mechanics. This was more than just a textbook, because it invented new methods by unifying the wave mechanics of Schrödinger with the matrix mechanics of Born and Heisenberg.

In particular, there had been a disconnect between bound electron states in a potential and free electron states scattering off the potential. In the one case the states have a discrete (quantized) spectrum, while in the other case the states have a continuous spectrum. There were standard quantum tools for decomposing discrete states by a projection onto eigenstates in Hilbert space, but an entirely different set of tools for handling the scattering states.

Yet Dirac saw a commonality between the two approaches. Specifically, eigenstate decomposition on the one hand used discrete sums of states, while scattering solutions on the other hand used integration over a continuum of states. In the first format, orthogonality was denoted by a Kronecker delta notation, but there was no equivalent in the continuum case—until Dirac introduced the delta function as a kernel in the integrand. In this way, the form of the equations with sums over states multiplied by Kronecker deltas took on the same form as integrals over states multiplied by the delta function.
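The parallel Dirac drew can be written side by side. The block below is a sketch in modern bra-ket notation, which is an assumption here since the text does not fix a notation; |n⟩ labels discrete eigenstates, |k⟩ labels continuum states, and c_n and c(k) are the expansion coefficients of a state |ψ⟩.

```latex
% Discrete spectrum: orthonormality uses the Kronecker delta
\langle m | n \rangle = \delta_{mn}, \qquad
|\psi\rangle = \sum_n c_n\, |n\rangle, \qquad
c_m = \sum_n c_n\, \delta_{mn}

% Continuous spectrum: orthonormality uses the Dirac delta
\langle k | k' \rangle = \delta(k - k'), \qquad
|\psi\rangle = \int c(k')\, |k'\rangle\, dk', \qquad
c(k) = \int c(k')\, \delta(k - k')\, dk'
```

In both lines, projecting the expansion onto a single state picks out one coefficient; the only change is that the sum becomes an integral and the Kronecker delta becomes the delta function.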

In addition to introducing the delta function into the quantum formulas, Dirac also explored many of the properties and rules of the delta function. He was aware that the delta function was not a “proper” function, but by beginning with a simple integral property as a starting axiom, he could derive virtually all of the extended properties of the delta function, including properties of its derivatives.
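As an illustration of how much follows from a single integral property, the sketch below takes the sifting integral as the starting axiom (an assumed choice of axiom, stated for a smooth test function f(x)) and lists three standard consequences.

```latex
% Starting axiom (sifting property)
\int_{-\infty}^{\infty} f(x)\, \delta(x - a)\, dx = f(a)

% Setting f(x) = 1 gives unit area
\int_{-\infty}^{\infty} \delta(x - a)\, dx = 1

% Substituting u = c x (c \neq 0) gives the scaling rule
\delta(c x) = \frac{1}{|c|}\, \delta(x)

% Integrating by parts (boundary terms vanish) gives the derivative rule
\int_{-\infty}^{\infty} f(x)\, \delta'(x - a)\, dx = -f'(a)
```

Each identity is understood to hold under an integral against a test function, which is the sense in which the delta function is not a “proper” function.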

Mathematicians, of course, were appalled and were quick to point out the insufficiency of the mathematical foundation for Dirac’s delta function, until the French mathematician Laurent Schwartz (1915 – 2002) developed the general theory of distributions in the 1940’s, which finally put the delta function in good standing.

Dirac’s introduction, development and use of the delta function was the first systematic definition of its properties. The earlier work by Cauchy, Fourier, Green and Heaviside had all touched upon the behavior of such “spiked” functions, but they had used them only in passing. After Dirac, physicists embraced the delta function as a powerful new tool in their toolbox, despite the lag in its formal acceptance by mathematicians, until the work of Schwartz redeemed it.

By David D. Nolte Feb. 17, 2022
