In the epilog of my book Mind at Light Speed: A New Kind of Intelligence (Free Press, 2001), I speculated about a future computer in which sheets of light interact with others to form new meanings and logical cascades as light makes decisions in a form of all-optical intelligence.

Twenty years later, that optical computer seems vaguely quaint, not because new technology has passed it by, like looking at the naïve musings of Jules Verne from our modern vantage point, but because the optical computer seems almost as far away now as it did back in 2001.

At the turn of the millennium we were seeing tremendous advances in data rates on fiber optics (see my previous blog) as well as the development of new types of nonlinear optical devices and switches that served the role of rudimentary logic switches. At that time, it was not unreasonable to believe that the pace of progress would remain undiminished, and that by 2020 we would have all-optical computers and signal processors in which the same optical data on the communication fibers would be involved in the logic that told the data what to do and where to go, all without the wasteful and slow conversion to electronics and back again into photons: the infamous OEO conversion.

However, the rate of increase of the transmission bandwidth on fiber optic cables slowed not long after the publication of my book, and nonlinear optics today still needs high intensities to be efficient, which remains a challenge for significant (commercial) use of all-optical logic.

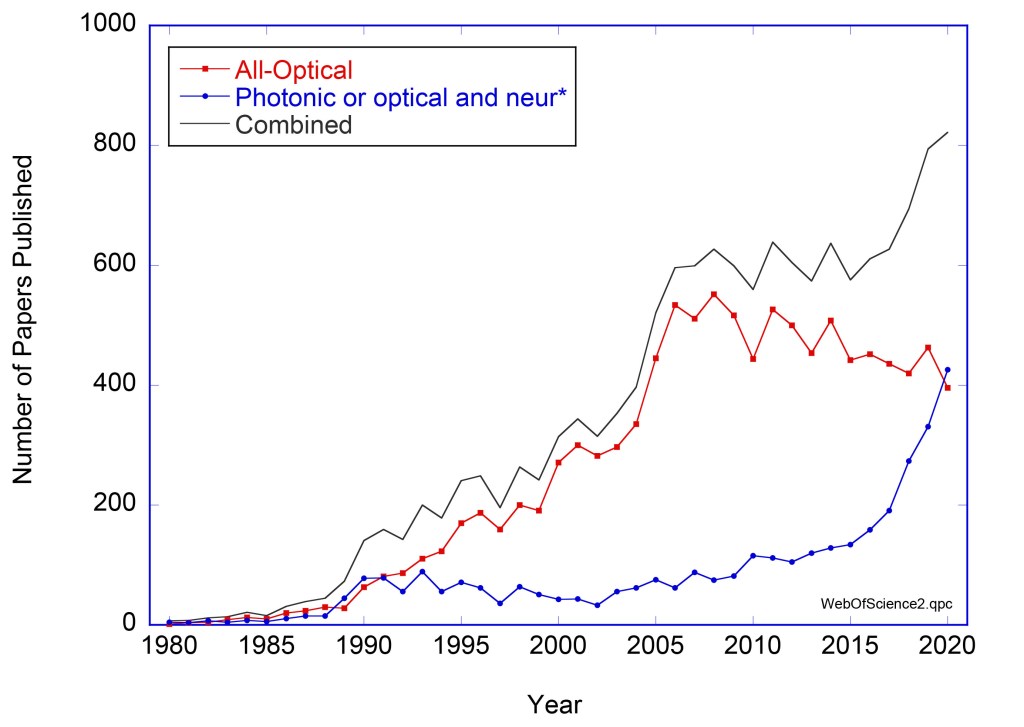

That said, it’s dangerous to ever say never, and research into all-optical computing and data processing is still going strong (see Fig. 1). It’s not the dream that was wrong; it was the time scale, just like fiber-to-the-home. Back in 2001, fiber-to-the-home was viewed as a pipe dream by serious technology scouts. It took twenty years, but now that vision is coming true in urban settings. Back in 2001, all-optical computing seemed about 20 years away, and now it still looks 20 years out. Maybe this time the prediction is right. Recent advances in all-optical processing give some hope for it. Here are some of those advances.

The “What” and “Why” of All-Optical Processing

One of the great dreams of photonics is the use of light beams to perform optical logic in optical processors just as electronic currents perform electronic logic in transistors and integrated circuits.

Our information age, starting with the telegraph in the mid-1800’s, has been built upon electronics because the charge of the electron makes it a natural decision maker. Two charges attract or repel by Coulomb’s Law, exerting forces upon each other. Although we don’t think of currents acting in quite that way, the foundation of electronic logic remains electrical interactions.

But with these interactions also come constraints—constraining currents to be contained within wires, waiting for charging times that slow down decisions, managing electrical resistance and dissipation that generate heat (computer processing farms in some places today need to be cooled by glacier meltwater). Electronic computing is hardly a green technology.

Therefore, the advantages of optical logic are clear: broadcasting information without the need for expensive copper wires, little dissipation or heat, low latency (signals propagate at the speed of light). Furthermore, information on the internet is already in the optical domain, so why not keep it in the optical domain and have optical information packets making the decisions? All the routing and switching decisions about where optical information packets should go could be done by the optical packets themselves inside optical computers.

But there is a problem. Photons in free space don’t interact—they pass through each other unaffected. This is the opposite of what is needed for logic and decision making. The challenge of optical logic is then to find a way to get photons to interact.

Think of the scene in Star Wars: A New Hope when Obi-Wan Kenobi and Darth Vader battle to the death in a lightsaber duel, with beams of light crashing against each other and repelling each other with equal and opposite forces. This is the photonic engineer’s dream! Light controlling light. But this cannot happen in free space. On the other hand, light beams can control other light beams inside nonlinear crystals, where one light beam changes the optical properties of the crystal, hence changing how another light beam travels through it. These are nonlinear optical crystals.

Nonlinear Optics

Virtually all optical control designs, for any kind of optical logic or switch, require one light beam to affect the properties of another, and that requires an intervening medium that has nonlinear optical properties. The physics of nonlinear optics is actually simple: one light beam changes the electronic structure of a material which affects the propagation of another (or even the same) beam. The key parameter is the nonlinear coefficient that determines how intense the control beam needs to be to produce a significant modulation of the other beam. This is where the challenge is. Most materials have very small nonlinear coefficients, and the intensity of the control beam usually must be very high.

Therefore, to create low-power all-optical logic gates and switches there are four main design principles: 1) increase the nonlinear susceptibility by engineering the material, 2) increase the interaction length between the two beams, 3) concentrate light into small volumes, and 4) introduce feedback to boost the internal light intensities. Let’s take these points one at a time.

Nonlinear susceptibility: The key to getting stronger interaction of light with light is in the ease with which a control beam of light can distort the crystal so that the optical conditions change for a signal beam. This is called the nonlinear susceptibility. When working with “conventional” crystals like semiconductors (e.g. CdZnSe) or ferroelectric oxides (e.g. LiNbO3), there is only so much engineering that can be done to tweak the nonlinear susceptibilities. However, artificially engineered materials can offer significant increases in nonlinear susceptibilities. These include plasmonic materials, metamaterials, organic semiconductors, and photonic crystals. An increasingly important class of nonlinear optical devices is the semiconductor optical amplifier (SOA).

Interaction length: The interaction strength between two light waves is a product of the nonlinear polarization and the length over which the waves interact. Interaction lengths can be made relatively long in waveguides but can be made orders of magnitude longer in fibers. Therefore, nonlinear effects in fiber optics are a promising avenue for achieving optical logic.

Intensity Concentration: Nonlinear polarization is the product of the nonlinear susceptibility with the field amplitude of the waves. Therefore, focusing light down to small cross sections produces high intensity for a given power, as in the core of an optical fiber, again showing advantages of fibers for optical logic implementations.
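To see how interaction length and intensity concentration combine, here is a rough sketch of the nonlinear phase shift produced by the optical Kerr effect, phase = (2π/λ) · n2 · (P/A) · L. The default numbers (a nonlinear index of about 2.6e-20 m²/W for silica, an 80 µm² mode area, a 1550 nm wavelength) are typical textbook values assumed purely for illustration, and fiber loss is ignored. The function names are hypothetical.

```python
import math

def kerr_phase_shift(power_w, length_m, n2=2.6e-20, area_m2=80e-12,
                     wavelength_m=1.55e-6):
    """Nonlinear phase shift (radians) picked up through the Kerr effect.

    Assumes a lossless guide and uniform intensity P / A over the mode area.
    The defaults are illustrative values for standard silica fiber.
    """
    intensity = power_w / area_m2  # W/m^2: a smaller area gives higher intensity
    return (2 * math.pi / wavelength_m) * n2 * intensity * length_m

def power_for_pi_shift(length_m, **kwargs):
    """Power (W) needed for a pi phase shift over the given length."""
    phase_per_watt = kerr_phase_shift(1.0, length_m, **kwargs)
    return math.pi / phase_per_watt

if __name__ == "__main__":
    # 1 cm waveguide, 1 m of fiber, 1 km of fiber (same material and area)
    for length in (0.01, 1.0, 1000.0):
        print(f"L = {length:8.2f} m -> P_pi = {power_for_pi_shift(length):.3g} W")
```

Under these assumptions a kilometer of fiber needs a couple of watts to reach a π phase shift, while a centimeter of the same material and cross section would need hundreds of kilowatts. That gap is why length, confinement and stronger nonlinear materials all matter.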

Feedback: Feedback, as in a standing-wave cavity, increases the intensity as well as the effective interaction length by folding the light wave continually back on itself. Both of these effects boost the nonlinear interaction, but then there is an additional benefit: interferometry. Cavities, like a Fabry-Perot, are interferometers in which a slight change in the round-trip phase can produce large changes in output light intensity. This is an optical analog to a transistor in which a small control current acts as a gate for an exponential signal current. The cavity of a semiconductor optical amplifier (SOA), with its feedback, high internal intensities, long effective interaction lengths and strongly nonlinear active medium, makes these elements attractive for optical logic gates. Similarly, integrated ring resonators have the advantage of interferometric control for light switching. Many current optical switches and logic gates are based on SOAs and integrated ring resonators.
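The interferometric sensitivity of a cavity can be illustrated with the standard Airy transmission formula for an ideal Fabry-Perot, T = 1 / (1 + F sin²(φ/2)) with F = 4R / (1 − R)². The sketch below assumes a lossless cavity with two identical mirrors; the reflectivities and the 0.1 rad phase shift are arbitrary illustrative choices, and the function name is hypothetical.

```python
import math

def fabry_perot_transmission(round_trip_phase, mirror_reflectivity):
    """Transmitted fraction for an ideal lossless cavity with identical mirrors."""
    r = mirror_reflectivity
    finesse_coeff = 4 * r / (1 - r) ** 2  # grows rapidly as r approaches 1
    return 1.0 / (1.0 + finesse_coeff * math.sin(round_trip_phase / 2) ** 2)

if __name__ == "__main__":
    small_shift = 0.1  # radians of extra round-trip phase, e.g. from a control beam
    for r in (0.04, 0.5, 0.9):
        on_resonance = fabry_perot_transmission(0.0, r)
        detuned = fabry_perot_transmission(small_shift, r)
        print(f"R = {r:4.2f}: T goes from {on_resonance:.3f} to {detuned:.3f}")
```

With weak mirrors (R = 0.04) the same 0.1 rad shift barely changes the output, while with R = 0.9 it cuts the transmission roughly in half. Stronger feedback turns a small phase change into a large intensity change.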

All-Optical Regeneration

The vision of the all-optical internet, where the logic operations that direct information to different locations are all performed by optical logic without ever converting into the electrical domain, is facing a barrier that is as challenging to overcome today as it was back in 2001: all-optical regeneration. All-optical regeneration has been and remains the Achilles’ heel of the all-optical internet.

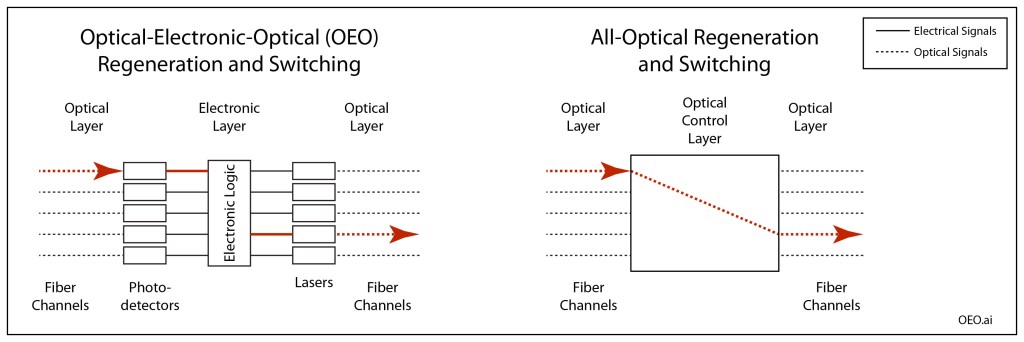

Signal regeneration is currently performed through OEO conversion: Optical-to-Electronic-to-Optical. In OEO conversion, a distorted signal (distortion is caused by attenuation, dispersion and noise as signals travel down fiber optics) is received by a photodetector and interpreted as ones and zeros, which then drive laser light sources that launch fresh optical pulses down the next stretch of fiber. The new pulses are virtually perfect, but they again degrade as they travel, until they are regenerated, and so on. The added advantage of the electrical layer is that the electronic signals can be used to drive conventional electronic logic for switching.

In all-optical regeneration, on the other hand, the optical pulses need to be reamplified, reshaped and retimed (known as 3R regeneration), all by sending the signal pulses through nonlinear amplifiers and mixers, which may include short stretches of highly nonlinear fiber (HNLF) or semiconductor optical amplifiers (SOA). There have been demonstrations of 2R all-optical regeneration (reamplifying and reshaping but not retiming) at lower data rates, but getting all 3Rs at the high data rates (40 Gb/s) of next-generation telecom systems remains elusive.
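A toy picture of the first two Rs may help. In the sketch below, a simple gain stands in for reamplification and a logistic S-curve stands in for the step-like power transfer function of a nonlinear element. This is only an assumed illustrative model, not a simulation of an HNLF or SOA regenerator, and it says nothing about retiming. The function name and all parameter values are hypothetical.

```python
import math

def regenerate_2r(power_in, preamp_gain=1.0, threshold=0.5, steepness=12.0):
    """Toy 2R regenerator: linear gain, then an S-shaped transfer function.

    Powers are normalized so that an ideal 'one' is 1.0 and an ideal 'zero' is 0.0.
    """
    amplified = power_in * preamp_gain  # reamplify the attenuated pulse
    # Reshape: levels below threshold are pushed toward 0, levels above toward 1.
    return 1.0 / (1.0 + math.exp(-steepness * (amplified - threshold)))

if __name__ == "__main__":
    # Pulses attenuated to about half power, with some amplitude noise on each level.
    for level in (0.00, 0.05, 0.10, 0.40, 0.45, 0.50):
        out = regenerate_2r(level, preamp_gain=2.0)
        print(f"in = {level:.2f} -> out = {out:.4f}")
```

In this toy model the noisy "zeros" and "ones" come out squeezed toward 0 and 1, because the flat ends of the S-curve compress amplitude fluctuations. The timing jitter that the third R has to remove is untouched.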

Nonetheless, there is an active academic literature that is pushing the envelope on optical logic devices and regenerators [1]. Many of the systems focus on SOAs, HNLFs and interferometers. Numerical modeling of these kinds of devices is currently ahead of bench-top demonstrations, primarily because of the difficulty of fabrication and device lifetime. But the numerical models point to performance that would be competitive with OEO. If this OOO conversion (Optical-to-Optical-to-Optical) is scalable (can handle increasing bit rates and increasing numbers of channels), then the current data crunch that is facing the telecom trunk lines (see my previous blog) may be a strong driver to implement such all-optical solutions.

It is important to keep in mind that legacy technology is not static but also continues to improve. As all-optical logic and switching and regeneration make progress, OEO conversion gets incrementally faster, creating a moving target. Therefore, we will need to wait another 20 years to see whether OEO is overtaken and replaced by all-optical.

Photonic Neural Networks

The most exciting area of optical logic today is in analog optical computing––specifically optical neural networks and photonic neuromorphic computing [2, 3]. A neural network is a highly-connected network of nodes and links in which information is distributed across the network in much the same way that information is distributed and processed in the brain. Neural networks can take several forms––from digital neural networks that are implemented with software on conventional digital computers, to analog neural networks implemented in specialized hardware, sometimes also called neuromorphic computing systems.

Optics and photonics are well suited to the analog form of neural network because of the superior ability of light to form free-space interconnects (links) among a high number of optical modes (nodes). This essential advantage of light for photonic neural networks was first demonstrated in the mid-1980’s using recurrent neural network architectures implemented in photorefractive (nonlinear optical) crystals (see Fig. 1 for a publication timeline). But this initial period of proof-of-principle was followed by a lag of about 2 decades due to a mismatch between driver applications (like high-speed logic on an all-optical internet) and the ability to configure the highly complex interconnects needed to perform the complex computations.

The rapid rise of deep machine learning over the past 5 years has removed this bottleneck, and there has subsequently been a major increase in optical implementations of neural networks. In particular, it is now possible to use conventional deep machine learning to design the interconnects of analog optical neural networks for fixed tasks such as image recognition [4]. At first look, this seems like a non-starter, because one might ask why not use the conventional trained deep network to do the recognition itself rather than using it to create a special-purpose optical recognition system. The answer lies primarily in the metrics of latency (speed) and energy cost.

In neural computing, approximately 90% of the time and energy go into matrix multiplication operations. Deep learning algorithms driving conventional digital computers need to do the multiplications at the sequential clock rate of the computer using nested loops. Optics, on the other hand, is ideally suited to perform matrix multiplications in a fully parallel manner (see Fig. 4). In addition, a hardware implementation using optics operates literally at the speed of light. The latency is limited only by the time of flight through the optical system. If the optical train is 1 meter, then the time for the complete computation is only a few nanoseconds at almost no energy dissipation. Combining the natural parallelism of light with the speed has led to unprecedented computational rates. For instance, recent implementations of photonic neural networks have demonstrated over 10 trillion operations per second (TOPS) [5].
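The contrast between nested loops and time of flight can be made concrete with a small sketch. The loop below counts the multiply-accumulate operations in one matrix-vector product, and a second function computes the transit time of light through an optical path. The one-operation-per-nanosecond clock is a deliberately simple assumption that ignores the parallelism of real digital hardware such as GPUs; the function names are hypothetical.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s in vacuum

def matvec_sequential(weights, inputs):
    """Matrix-vector product with explicit nested loops, counting each multiply-accumulate."""
    outputs = []
    mac_count = 0
    for row in weights:                # one output node per row
        acc = 0.0
        for w, x in zip(row, inputs):  # one multiply-accumulate per link
            acc += w * x
            mac_count += 1
        outputs.append(acc)
    return outputs, mac_count

def sequential_time_s(mac_count, clock_hz=1e9):
    """Time if exactly one multiply-accumulate completes per clock cycle (an assumption)."""
    return mac_count / clock_hz

def time_of_flight_s(path_length_m, refractive_index=1.0):
    """Latency of an optical system limited only by the transit time of the light."""
    return path_length_m * refractive_index / SPEED_OF_LIGHT

if __name__ == "__main__":
    n = 1000
    print(f"{n}x{n} layer, sequential: {sequential_time_s(n * n) * 1e3:.2f} ms")
    print(f"1 m optical train:         {time_of_flight_s(1.0) * 1e9:.2f} ns")
```

Under these simplified assumptions a 1000 × 1000 layer takes about a millisecond when done one operation at a time, against a little over 3 ns for light to cross a 1 meter optical system, independent of the matrix size.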

It is important to keep in mind that although many of these photonic neural networks are characterized as all-optical, they are generally not reconfigurable, meaning that they are not adaptive to changing or evolving training sets or changing input information. Most adaptive systems use OEO conversion with electronically-addressed spatial light modulators (SLM) that are driven by digital logic. Another technology gaining recent traction is neuromorphic photonics in which neural processing is implemented on photonic integrated circuits (PICs) with OEO conversion. The integration of large numbers of light-emitting sources on PICs is now routine, relieving the OEO bottleneck as electronics and photonics merge in silicon photonics.

Farther afield are all-optical systems that are adaptive through the use of optically-addressed spatial light modulators or nonlinear materials. In fact, these types of adaptive all-optical neural networks were among the first demonstrated in the late 1980’s. More recently, advanced adaptive optical materials, as well as fiber delay lines for a type of recurrent neural network known as reservoir computing, have been used to implement faster and more efficient optical nonlinearities needed for adaptive updates of neural weights. But there are still years to go before light is adaptively controlling light entirely in the optical domain at the speeds and with the flexibility needed for real-world applications like photonic packet switching in telecom fiber-optic routers.

In stark contrast to the status of classical all-optical computing, photonic quantum computing is on the cusp of revolutionizing the field of quantum information science. The recent demonstration from the Canadian company Xanadu of a programmable photonic quantum computer that operates at room temperature may be the harbinger of what is to come in the third generation Machines of Light: Quantum Optical Computers, which is the topic of my next blog.

By David D. Nolte, Nov. 28, 2021

Further Reading

[1] V. Sasikala and K. Chitra, “All optical switching and associated technologies: a review,” Journal of Optics-India, vol. 47, no. 3, pp. 307-317, Sep (2018)

[2] C. Huang et al., “Prospects and applications of photonic neural networks,” Advances in Physics-X, vol. 7, no. 1, Jan (2022), Art no. 1981155

[3] G. Wetzstein, A. Ozcan, S. Gigan, S. H. Fan, D. Englund, M. Soljacic, C. Denz, D. A. B. Miller, and D. Psaltis, “Inference in artificial intelligence with deep optics and photonics,” Nature, vol. 588, no. 7836, pp. 39-47, Dec (2020)

[4] X. Lin, Y. Rivenson, N. T. Yardimci, M. Veli, Y. Luo, M. Jarrahi, and A. Ozcan, “All-optical machine learning using diffractive deep neural networks,” Science, vol. 361, no. 6406, pp. 1004-+, Sep (2018)

[5] X. Y. Xu, M. X. Tan, B. Corcoran, J. Y. Wu, A. Boes, T. G. Nguyen, S. T. Chu, B. E. Little, D. G. Hicks, R. Morandotti, A. Mitchell, and D. J. Moss, “11 TOPS photonic convolutional accelerator for optical neural networks,” Nature, vol. 589, no. 7840, pp. 44-+, Jan (2021)