Twenty years ago this November, my book Mind at Light Speed: A New Kind of Intelligence was published by The Free Press (Simon & Schuster, 2001). The book described the state of optical science at the turn of the Millennium through three generations of Machines of Light: The Optoelectronic Generation of electronic control meshed with photonic communication; The All-Optical Generation of optical logic; and The Quantum Optical Generation of quantum communication and computing.

To mark the anniversary, this blog post begins a three-part series on the advances in optical science and technology in the 20 years since the publication of Mind at Light Speed. This first blog reviews fiber optics and the photonic internet. The second blog reviews all-optical communication and computing. The third and final blog reviews the current state of photonic quantum communication and computing.

The Wabash Yacht Club

During late 1999 and early 2000, while I was writing Mind at Light Speed, my wife Laura and I would often have lunch at the ironically named Wabash Yacht Club. Not only was it not a yacht club, but it was a dark and dingy college-town bar located in a drab 1970s-era plaza in West Lafayette, Indiana, far from any navigable body of water. But it had a great garlic burger and we loved the atmosphere.

One of the TV monitors in the bar was always tuned to a station that covered stock news, and almost every day we would watch the NASDAQ rise 100 points just over lunch. This was the time of the great dot-com stock-market bubble—one of the greatest speculative bubbles in the history of world economics. In the second quarter of 2000, total US venture capital investments exceeded $30B as everyone chased the revolution in consumer market economics.

Part of that dot-com bubble was a massive bubble in optical technology companies, because everyone knew that the dot-com era would ride on the back of fiber-optic telecommunications. Fiber optics at that time had already revolutionized transatlantic telecommunications, and there seemed to be no obstacle to doing the same on land, with fiber optics to every home bringing every dot-com product and every movie ever made to every house. What would make this possible was the tremendous information bandwidth that can be crammed into tiny glass fibers in the form of photon packets traveling at the speed of light.

Doing optics research at that time was a heady experience. My research on real-time optical holography was only on the fringe of optical communications, but at the CLEO conference on lasers and electro-optics, I was invited by tiny optics companies to giant parties, like a fully catered sunset cruise on a schooner sailing Baltimore’s inner harbor. Venture capital scouts took me to dinner in San Francisco with an eye to scooping up whatever patents I could dream of. And this was just the side show. At the flagship fiber-optics conference, the Optical Fiber Communication Conference (OFC) of the OSA, things were even crazier. One tiny company that made a simple optical switch went almost overnight from a company worth a couple of million to being bought out by Nortel (the giant Canadian telecommunications conglomerate of the day) for over 4 billion dollars.

The Telecom Bubble and Bust

On the other side from the small mom-and-pop optics companies were the giants like Corning (who made the glass for the glass fiber optics) and Nortel. At the height of the telecom bubble in September 2000, Nortel had a capitalization of almost $400B Canadian due to massive speculation about the markets around fiber-optic networks.

One of the central questions of the optics bubble of Y2K was what the new internet market would look like. Back then, fiber was only beginning to connect to distribution nodes that were connected off the main cross-country trunk lines. Cable TV dominated the market with fixed programming where you had to watch whatever they transmitted whenever they transmitted it. Google was only 2 years old, and YouTube didn’t even exist yet; it was founded in 2005. Everyone still shopped at malls, while Amazon had only gone public three years before.

There were fortune tellers who predicted that fiber-to-the-home would tap a vast market of online commerce where you could buy anything you wanted and have it delivered to your door. They foretold of movies-on-demand, where anyone could stream any movie they wanted at any time. They also foretold of phone calls and video chats that never went over the phone lines ruled by the telephone monopolies. The bandwidth, the data rates, that these markets would drive were astronomical. The only technology at that time that could support such high data rates was fiber optics.

At first, these fortune tellers drove an irrational exuberance. But as the stocks inflated, there were doomsayers who pointed out that the costs at that time of bringing fiber into homes were prohibitive. And the idea that people would be willing to pay for movies-on-demand was laughable. The cost of the equipment and the installation just didn’t match what then seemed to be a sparse market demand. Furthermore, the fiber technology in the year 2000 couldn’t even get to the kind of data rates that could support these dreams.

In March of 2000 the NASDAQ hit a high of 5000, and then the bottom fell out.

By November 2001 the NASDAQ had fallen to 1500. One of the worst cases of the telecom bust was Nortel, whose capitalization plummeted from $400B Canadian at its high to $5B Canadian by August 2002. Other optics companies fared little better.

The main questions, as we stand now looking back from 20 years in the future, are: What in real life motivated the optics bubble of 2000? And how far has optical technology come since then? The surprising answer is that the promise of optics in 2000 was not wrong—the time scale was just off.

Fiber to the Home

Today, fixed last-mile broadband service is an assumed part of life in metro areas in the US. This broadband takes on three forms: legacy coaxial cable, 4G wireless soon to be upgraded to 5G, and fiber optics. There are arguments pro and con for each of these technologies, especially moving forward 10 or 20 years or more, and a lot is at stake. The global market revenue was $108 billion in 2020 and is expected to reach $200 billion in 2027, growing at over 9% per year from 2021 to 2027.
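The quoted growth rate can be checked against the two revenue figures. This is a minimal sketch, assuming the 9% figure is a compound annual growth rate and that 2020 to 2027 counts as seven compounding years; both are my assumptions, and the function name is only illustrative.

```python
def compound_annual_growth_rate(start_value, end_value, years):
    """Constant yearly growth rate that turns start_value into end_value."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Revenue figures from the text, in billions of US dollars.
REVENUE_2020 = 108.0
REVENUE_2027 = 200.0

# Assumption: seven compounding years between the two figures.
implied_rate = compound_annual_growth_rate(REVENUE_2020, REVENUE_2027, years=7)
print(f"Implied growth rate: {implied_rate:.1%} per year")
```

Under those assumptions the implied rate comes out a little above 9% per year, consistent with the figure quoted above.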

To sort through the pros and cons to pick the winning technology, several key performance parameters must be understood for each technology. The two most important performance measures are bandwidth and latency. Bandwidth is the data rate: how many bits per second you can get to the home. Latency is a little more subtle. It is the time it takes to complete a transmission. This time includes the actual time for information to travel from a transmitter to a receiver, but that is rarely the major contributor. Currently, almost all of the latency is caused by the logical operations needed to move the information onto and off of the home data links.

Coax (short for coaxial cable) is attractive because so much of the last-mile legacy hardware is based on the old cable services. But coax cable has very limited bandwidth and high latency. As a broadband technology, it is slowly disappearing.

Wireless is attractive because the information is transmitted in the open air without any need for physical wires or fibers. But high data rates require high frequency. For instance, 4G wireless operates at frequencies between 700 MHz and 2.6 GHz. Current WiFi is 2.4 GHz or 5 GHz, and next-generation 5G will have 26 GHz using millimeter wave technology, and WiGig is even more extreme at 60 GHz. While WiGig will deliver up to 10 Gbits per second, as everyone with wireless routers in their homes knows, the higher the frequency, the more it is blocked by walls or other obstacles. Even 5 GHz is mostly attenuated by walls, and the attenuation gets worse as the frequency gets higher. Testing of 5G networks has shown that cell towers need to be closely spaced to allow seamless coverage. And the crazy high frequency of WiGig all but guarantees that it will only be usable for line-of-sight communication within a home or in an enterprise setting.

Fiber for the last mile, on the other hand, has multiple advantages. Chief among these is that fiber is passive. It is a light pipe that has ten thousand times more usable bandwidth than a coaxial cable. For instance, lab tests have pushed up to 100 Tbit/sec over kilometers of fiber. To access that bandwidth, the input and output hardware can be continually upgraded, while the installed fiber is there to handle pretty much any increase in data rates for the next 10 or 20 years. Fiber installed today is supporting 1 Gbit/sec data rates, and the existing protocol will work up to 10 Gbit/sec, data rates that can only be hoped for with WiFi. Furthermore, optical communications on fiber have latencies of around 1.5 msec over 20 kilometers, compared with 4G LTE, which has a latency of 8 msec over 1 mile. The much lower latency is key to supporting activities that cannot stand much delay, such as voice over IP, video chat, remote-controlled robots, and virtual reality (e.g., gaming). On top of all of that, the internet technology up to the last mile is already almost all optical. So fiber just extends the current architecture across the last mile.
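To see how little of that 1.5 msec is actual travel time, here is a rough estimate of the light transit time over 20 kilometers of fiber. It is only a sketch: the group index of 1.47 is a typical value I am assuming for silica fiber, not a number from any particular system, and the function name is illustrative.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # vacuum speed of light (exact by definition)
ASSUMED_GROUP_INDEX = 1.47  # assumption: typical value for silica fiber


def fiber_transit_time_s(length_m, group_index=ASSUMED_GROUP_INDEX):
    """Time for a light pulse to travel length_m of fiber, in seconds."""
    return length_m * group_index / SPEED_OF_LIGHT_M_PER_S


QUOTED_LATENCY_MS = 1.5  # fiber latency over 20 km quoted in the text

transit_ms = fiber_transit_time_s(20_000) * 1e3
transit_share = transit_ms / QUOTED_LATENCY_MS
print(f"Transit time over 20 km: {transit_ms:.3f} msec")
print(f"Share of the quoted latency: {transit_share:.0%}")
```

With this assumed index, the transit time is roughly a tenth of a millisecond, a small fraction of the quoted latency. The rest is spent in the logical operations at the two ends of the link.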

Therefore, fixed fiber last-mile broadband service is a technology winner. Though installation costs can be higher than for WiFi or coax in the short run, the long-run costs are lower when amortized over the lifetime of the installed fiber, which can exceed 25 years.

It is becoming routine to have fiber-to-the-curb (FTTC), where a connection box converts photons in fibers into electrons on copper to take the information into the home. But a market also exists in urban settings for fiber-to-the-home (FTTH), where the fiber goes directly into the house to a receiver, and only then is the information converted from photons to electrons.

Shortly after Mind at Light Speed was published in 2001, I was called up by a reporter for the Seattle Times who wanted to know my thoughts about FTTH. When I extolled its virtue, he nearly hung up on me. He was in the middle of debunking the telecom bubble and his premise was that FTTH was a fraud. In 2001 he might have been right. But in 2021, FTTH is here, it is expanding, and it will continue to do so for at least another quarter century. Fiber to the home will become the legacy that some future disruptive technology will need to displace.

Trunk-Line Fiber Optics

Despite the rosy picture for Fiber to the Home, a storm is brewing for the optical trunk lines. The total traffic on the internet topped a billion Terabytes in 2019 and is growing fast, doubling about every 2 years on an exponential growth curve. Over 20 years, that is ten doublings, or about a thousand times more traffic in 2040 than today. Therefore, the technology companies that manage and supply the internet worry about a fast-approaching capacity crunch, when there will be more demand than the internet can supply.
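The factor of a thousand follows directly from the doubling time. A minimal sketch of the arithmetic, assuming the two-year doubling time holds steady for the whole 20 years (the function name is illustrative):

```python
def traffic_growth_factor(years, doubling_time_years=2.0):
    """Multiplicative growth after `years` of steady exponential doubling."""
    return 2.0 ** (years / doubling_time_years)


doublings_in_20_years = 20 / 2.0
growth_in_20_years = traffic_growth_factor(20)
print(f"{doublings_in_20_years:.0f} doublings give a factor of {growth_in_20_years:.0f}")
```

Ten doublings give a factor of 1024, which is the "factor of a thousand" quoted above.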

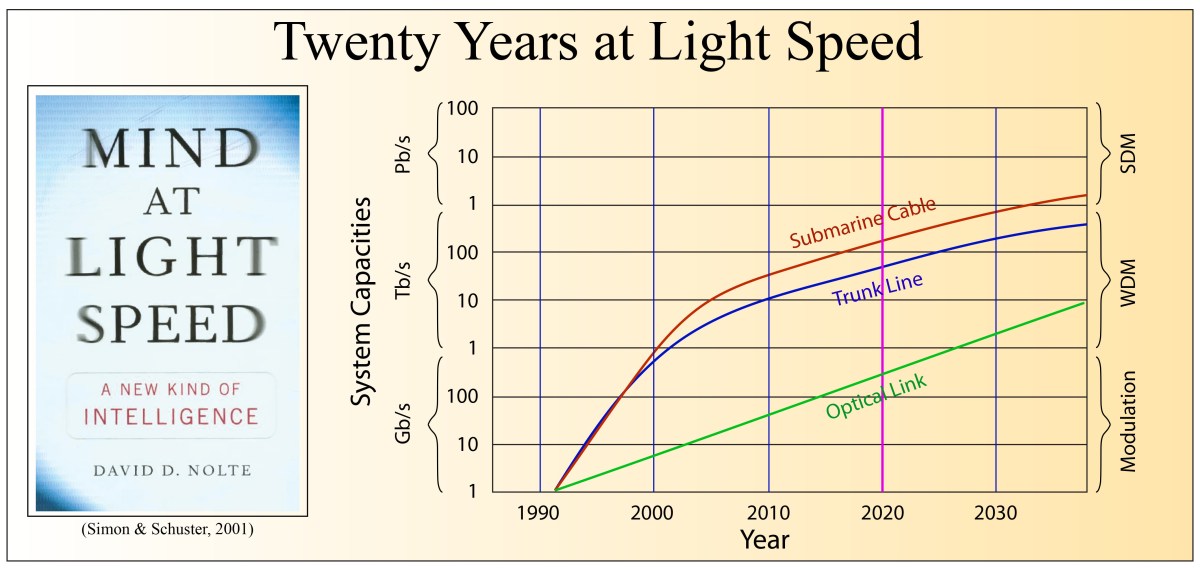

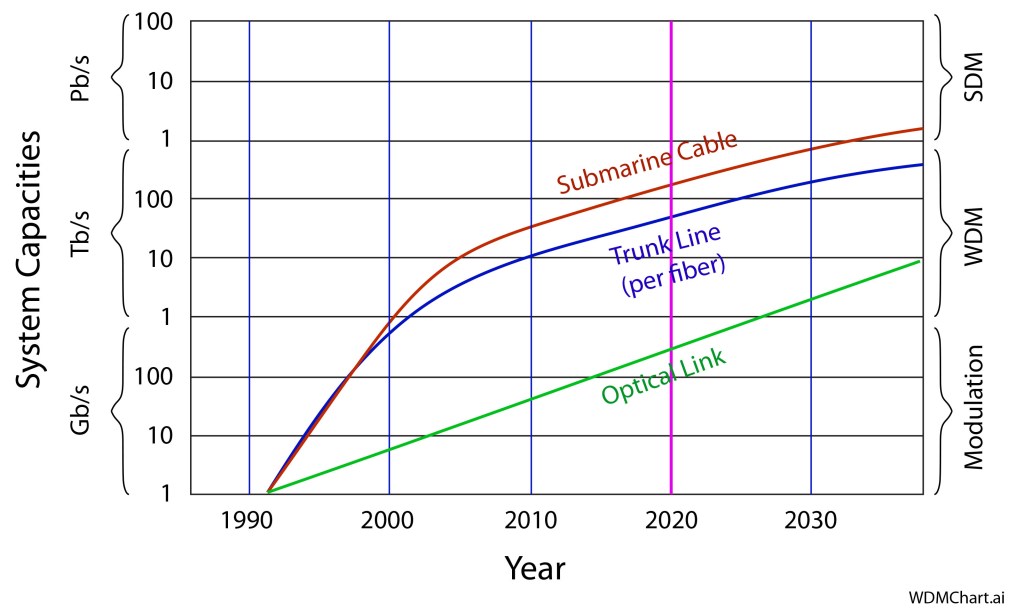

Over the past 20 years, the data rates on the fiber trunk lines (the major communication links that span the United States) matched demand by packing more bits in more ways into the fibers. Up to 2009, increased data rates were achieved using dispersion-managed wavelength-division multiplexing (WDM), which means that they kept adding more lasers of slightly different colors to send the optical bits down the fiber. For instance, in 2009 the commercial standard was 80 colors each running at 40 Gbit/sec for a total of 3.2 Tbit/sec down a single fiber.
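The WDM total is simply the number of colors times the rate carried by each color. A short sketch of that bookkeeping, using the 2009 numbers quoted above (the function name is illustrative, and overhead such as error correction is ignored):

```python
def wdm_capacity_gbit_s(num_wavelengths, rate_per_wavelength_gbit_s):
    """Aggregate fiber capacity when every wavelength carries the same rate."""
    return num_wavelengths * rate_per_wavelength_gbit_s


# 2009 commercial standard from the text: 80 colors at 40 Gbit/sec each.
wdm_total_gbit_s = wdm_capacity_gbit_s(80, 40)
print(f"Total: {wdm_total_gbit_s / 1000} Tbit/sec on a single fiber")
```

Adding colors or raising the rate per color both scale the total in direct proportion, which is how WDM kept up with demand through 2009.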

Since 2009, increased bandwidth has been achieved through coherent WDM, where not only the amplitude of light but also the phase of the light is used to encode bits of information using interferometry. We are still in the coherent WDM era as improved signal processing is helping to fill the potential coherent bandwidth of a fiber. Commercial protocols using phase-shift keying, quadrature phase-shift keying, and 16-quadrature amplitude modulation currently support 50 Gbit/sec, 100 Gbit/sec and 200 Gbit/sec, respectively. But the capacity remaining is shrinking, and several years from now, a new era will need to begin in order to keep up with demand. But if fibers are already using time, color, polarization and phase to carry information, what is left?
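One way to read the 50, 100, 200 progression is in terms of bits per symbol: a constellation with M distinguishable states carries log2(M) bits each time a symbol is sent. The sketch below assumes that "phase-shift keying" here means binary PSK (two states) and that all three formats run at the same symbol rate; both are my assumptions for illustration, and the names are hypothetical.

```python
import math

# Constellation sizes for the three formats named in the text.
# Assumption: plain "phase-shift keying" is binary PSK with 2 states.
CONSTELLATION_POINTS = {"PSK": 2, "QPSK": 4, "16-QAM": 16}

PSK_RATE_GBIT_S = 50  # rate quoted in the text for phase-shift keying


def bits_per_symbol(points):
    """Bits carried by one symbol drawn from a constellation of `points` states."""
    return int(math.log2(points))


def rate_relative_to_psk(points):
    """Data rate if only the bits per symbol change (same symbol rate assumed)."""
    return PSK_RATE_GBIT_S * bits_per_symbol(points)


modulation_rates = {
    name: rate_relative_to_psk(points)
    for name, points in CONSTELLATION_POINTS.items()
}
for name, rate in modulation_rates.items():
    bits = bits_per_symbol(CONSTELLATION_POINTS[name])
    print(f"{name}: {bits} bit(s) per symbol, {rate} Gbit/sec")
```

Under those assumptions the 1, 2 and 4 bits per symbol reproduce the 50, 100 and 200 Gbit/sec steps. Denser constellations carry more bits but need a cleaner signal, which is why the remaining capacity is shrinking.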

The answer is space!

Coming soon will be commercial fiber trunk lines that use space-division multiplexing (SDM). The simplest form is already happening now as fiber bundles are replacing single-mode fibers. If you double the number of fibers in a cable, then you double the data rate of the cable. But the problem with this simple approach is the scaling. If you double just 10 times, then you need 1024 fibers in a single cable, with each fiber needing its own hardware to launch the data and retrieve it at the other end. This is linear scaling, in which cost rises in direct proportion to capacity, and that is bad scaling for commercial endeavors.

Therefore, alternatives for tapping into SDM are being explored in lab demonstrations now that have sublinear scaling (costs don’t rise as fast as improved capacity). These include multi-element fibers, where multiple fiber optical elements are manufactured as a group rather than individually and then combined into a cable. There are also multi-core fibers, where multiple cores share the same cladding. These approaches provide multiple light paths for multiple channels without a proportional rise in cost.

More exciting are approaches that use few-mode fibers (FMF) to support multiple spatial modes traveling simultaneously down the same fiber. In the same vein are coupled-core fibers, which are a middle ground between multi-core fibers and few-mode fibers in that individual cores can interact within the cladding to support coupled spatial modes that can encode separate spatial channels. Finally, combinations of approaches can use multiple formats. For instance, a recent experiment combined FMF and multi-core fiber (MCF), using 19 cores each supporting 6 spatial modes for a total of 114 spatial channels.
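The channel count in that experiment is the product of the two kinds of spatial multiplexing. A small sketch of the count, assuming every core supports the same number of modes (the function name is illustrative):

```python
def spatial_channels(num_cores, modes_per_core):
    """Independent spatial channels in a multi-core, few-mode fiber."""
    return num_cores * modes_per_core


# Experiment quoted in the text: 19 cores, 6 spatial modes per core.
sdm_channels = spatial_channels(num_cores=19, modes_per_core=6)
print(f"{sdm_channels} spatial channels in a single fiber")
```

A bundle of ordinary single-mode fibers would need one fiber per channel to match that count, which is the linear scaling these combined approaches try to avoid.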

Space-division multiplexing has been under development for several years now, yet it has not fully moved into commercial systems. This may be a sign that the doubling rate of bandwidth is starting to slow down, just as Moore’s Law slowed down for electronic chips. Then again, doomsayers foretold the end of Moore’s Law for decades before it actually slowed down, because new ideas cannot be predicted. And even if the full capacity of fiber is being approached, there is certainly nothing that offers better bandwidth to replace it. So fiber optics will remain the core technology of the internet for the foreseeable future.

But what of the other generations of Machines of Light: the all-optical and the quantum-optical generations? How have optics and photonics fared in those fields? Stay tuned for my next blogs to find out.

By David D. Nolte, Nov. 8, 2021

Bibliography

[1] P. J. Winzer, D. T. Neilson, and A. R. Chraplyvy, “Fiber-optic transmission and networking: the previous 20 and the next 20 years,” Optics Express, vol. 26, no. 18, pp. 24190-24239, Sep. 2018.

[2] W. Shi, Y. Tian, and A. Gervais, “Scaling capacity of fiber-optic transmission systems via silicon photonics,” Nanophotonics, vol. 9, no. 16, pp. 4629-4663, Nov. 2020.

[3] E. Agrell, M. Karlsson, A. R. Chraplyvy, D. J. Richardson, P. M. Krummrich, P. Winzer, K. Roberts, J. K. Fischer, S. J. Savory, B. J. Eggleton, M. Secondini, F. R. Kschischang, A. Lord, J. Prat, I. Tomkos, J. E. Bowers, S. Srinivasan, M. Brandt-Pearce, and N. Gisin, “Roadmap of optical communications,” Journal of Optics, vol. 18, no. 6, p. 063002, May 2016.